
The default in this case is 1 week, because this is restrictive enough to speed things up. If either the pipeline or the SQL breaks for some reason, the problem will need to be resolved within one week, or some batches that still need to be processed will be excluded (the filter can of course be updated if that were to happen).

Select all session IDs that have at least one event in the batch (or batches) that we want to process. The key lines of the query are:

    INSERT INTO ssion_id (
      AND etl_tstamp IN (SELECT etl_tstamp FROM scratch.etl_tstamps ORDER BY 1)

In some cases, it’s easier if we can restrict on the event ID, so we don’t need to join to atomic.events to get the session ID (e.g. in the unstructured event or context tables).

Queries that follow can then restrict events to those sessions:

    -- YY% are within the last week so we don't look at older events
    AND domain_sessionid IN (SELECT id FROM ssion_id ORDER BY 1)

There are a couple of important things to note here.

First, some sessions will have events that were processed in earlier runs. This will happen when a session was still active when the pipeline last ran, or if not all events had arrived yet. These sessions already have a row in ssions, which we will drop just before we update the table.
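The session-level steps described here can be sketched as follows. This is a minimal sketch under stated assumptions, not the tutorial's own queries: the table names `scratch.example_session_ids`, `scratch.example_sessions` and `derived.example_sessions` are hypothetical placeholders (the original names are truncated in the text), the `session_id` column is assumed, and recording the processed batches in the manifest at the end is an assumption about how the run would be closed out.

```sql
-- Hypothetical names, used only for illustration:
--   scratch.example_session_ids (id) : sessions touched by the new batches
--   scratch.example_sessions         : freshly rebuilt rows for those sessions
--   derived.example_sessions         : the derived sessions table (session_id assumed)

-- Select all session IDs with at least one event in the batches to process
INSERT INTO scratch.example_session_ids (

  SELECT DISTINCT domain_sessionid AS id
  FROM atomic.events
  WHERE collector_tstamp > DATEADD(week, -1, CURRENT_DATE) -- one-week sort key filter
    AND etl_tstamp IN (SELECT etl_tstamp FROM scratch.etl_tstamps)
    AND domain_sessionid IS NOT NULL

);

-- (scratch.example_sessions would be rebuilt here from the events of those sessions)

-- Drop the rows of sessions that already exist, insert the rebuilt rows,
-- and (assumption) add the processed batches to the manifest
BEGIN;

DELETE FROM derived.example_sessions
WHERE session_id IN (SELECT id FROM scratch.example_session_ids);

INSERT INTO derived.example_sessions (SELECT * FROM scratch.example_sessions);

INSERT INTO derived.etl_tstamps (SELECT etl_tstamp FROM scratch.etl_tstamps);

COMMIT;
```

Wrapping the delete and the inserts in one transaction means readers of the derived table never see a state in which the old rows are gone but the rebuilt rows have not yet arrived.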

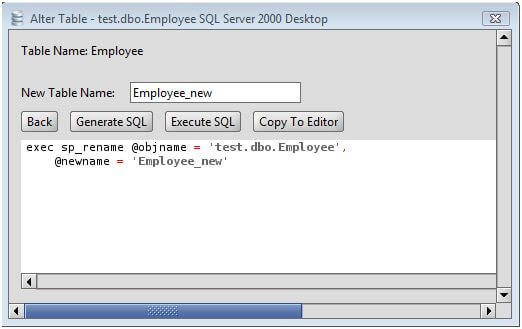

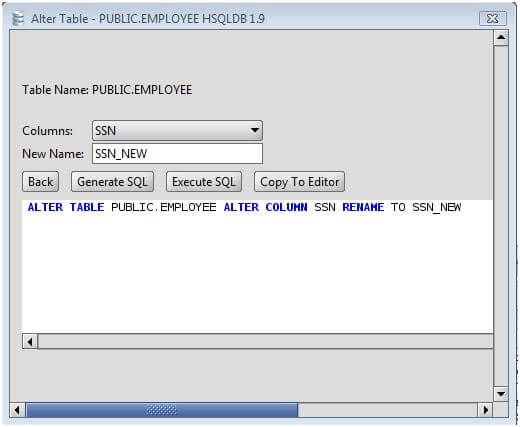

We’ll use a simple SQL data model to illustrate how one would go about updating a derived table rather than rebuilding it with each run. The data model itself is simple, but the queries that make it incremental also work for a more complex data model.

Each time the Snowplow pipeline runs, we create a new sessions table using all events in atomic.events, drop the old table, and rename the new table:

    CREATE SCHEMA IF NOT EXISTS derived;
    ALTER TABLE ssions_new RENAME TO sessions;

In the next section, we’ll make this example model incremental.

Moving to incremental updates

2.1 Preparation

Rebuild the sessions table one last time

The sessions table needs to exist before we can start updating it, so build the table from scratch one last time.

Next, create a table (derived.etl_tstamps) that will be used to keep track of which events have been processed.

    CREATE TABLE derived.etl_tstamps (etl_tstamp timestamp encode lzo) DISTSTYLE ALL;
    INSERT INTO derived.etl_tstamps (SELECT DISTINCT etl_tstamp FROM atomic.events);

    CREATE SCHEMA IF NOT EXISTS scratch;
    CREATE TABLE scratch.etl_tstamps (LIKE derived.etl_tstamps);
    CREATE TABLE ssions (LIKE ssions);

The incremental update happens in a couple of steps.

Step 0: truncate the tables in scratch

    TRUNCATE scratch.etl_tstamps;

Step 1: select all ETL timestamps that are not in the manifest

    INSERT INTO scratch.etl_tstamps (
      WITH recent_etl_tstamps AS ( -- return all recent ETL timestamps
        WHERE collector_tstamp > DATEADD(week, -1, CURRENT_DATE) -- restrict table scan to the last week (SORTKEY)
      WHERE etl_tstamp NOT IN (SELECT etl_tstamp FROM derived.etl_tstamps ORDER BY 1) -- return all ETL timestamps that are not in the manifest (i.e.

Because the atomic tables are sorted on collector_tstamp, Redshift will be able to skip almost all blocks because the events in them are older than 1 week, therefore returning the results much faster. This same filter will be used in subsequent queries as well.
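Steps 0 and 1 can be sketched as one complete script. This is a hedged reconstruction rather than the tutorial's verbatim query: the `SELECT DISTINCT etl_tstamp FROM atomic.events` inside the common table expression and the outer `SELECT` are assumptions inferred from the surrounding description and from the statement that seeds `derived.etl_tstamps`.

```sql
-- Sketch only: the SELECT clauses below are assumptions, not the original query.

-- Step 0: empty the scratch table left over from the previous run
TRUNCATE scratch.etl_tstamps;

-- Step 1: stage every recent ETL timestamp that is not yet in the manifest
INSERT INTO scratch.etl_tstamps (

  WITH recent_etl_tstamps AS ( -- all ETL timestamps seen in the last week

    SELECT DISTINCT etl_tstamp
    FROM atomic.events
    WHERE collector_tstamp > DATEADD(week, -1, CURRENT_DATE) -- sort key filter limits the scan

  )

  SELECT etl_tstamp
  FROM recent_etl_tstamps
  WHERE etl_tstamp NOT IN (SELECT etl_tstamp FROM derived.etl_tstamps) -- manifest of processed batches

);
```

The one-week filter sits inside the common table expression so that the scan of atomic.events is restricted before the comparison against the manifest, which itself stays small because it holds one row per batch.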

Because the time it takes to build the derived tables goes up with the number of events in Redshift, there will be a point when rebuilding the tables from scratch each time will start to take too long. One solution is to move the data models out of Redshift and rewrite them to run on Spark instead. We have written about this before and it’s something we will keep exploring. This tutorial instead focuses on improving the performance in Redshift, in particular on how to update a set of derived tables rather than rebuild them from scratch with each run.

Most Snowplow users load their data into Redshift and implement their data models in SQL (using either SQL Runner or a BI tool such as Looker). These data models often work like this: each time new data is loaded into Redshift, the derived tables are dropped (or truncated) and rebuilt using all historical data. This approach has advantages: for instance, it makes it possible to iterate on the data model without having to update the existing derived tables.

Loading events into a data warehouse is almost never a goal in and of itself. The companies that take full advantage of their Snowplow data all have a data modeling step that transforms and aggregates events into a set of derived tables, which are then made available to end users in the business.
